A Practical Guide to Turning Design Into Code (2026)

Transform your development process with our guide on turning design into code. Learn AI-powered workflows and proven strategies for a seamless transition.

Nafis Amiri

Co-Founder of CatDoes

Getting your design into code is the final bridge between a creative vision and a working application. It's the process of taking mockups from tools like Figma and turning them into interactive code. Today, AI-native platforms handle that translation automatically, cutting weeks of manual work out of the process.

TL;DR

Modern AI platforms turn Figma designs or plain-English prompts into production-ready code in minutes instead of weeks.

Output quality depends on your design system, layer naming, and prompt detail. Garbage in, garbage out.

Plan to manually refine about 20% of the generated code. Complex logic, performance tuning, and niche API integrations still need a human.

Some AI app builders can also generate a backend (database, auth, APIs) in the same flow.

Table of Contents

The Modern Way to Turn Design Into Code

Preparing Your Designs for the Handoff

How AI Agents Build Your App

Refining and Customizing the Generated Code

Integrating a Backend and Launching

Frequently Asked Questions

The Modern Way to Turn Design Into Code

The path from concept to deployed app used to mean a handoff between designers and developers filled with friction and back-and-forth cycles. Modern tools close that gap with automation.

Instead of developers recreating every button, screen, and interaction from a static design file, the system interprets the design and generates production-ready code.

Why the Shift Is Happening Now

Demand for digital products keeps growing, and recent advances in machine learning have made it possible to automate work that once required years of specialized skill. That combination is why most major design tools now ship a code-export or AI-generation feature.

Traditional vs AI-Powered Workflow

| Phase | Traditional | AI-Powered |
|---|---|---|
| Handoff | Manual export of assets and redlines | Direct import from Figma |
| UI development | Engineers write HTML/CSS/JS component by component | AI generates code for components and screens in minutes |
| Iteration | Design changes require a full loop back to developers | Design changes update the code automatically |
| Time to prototype | Weeks or months | Hours or days |
| Developer focus | Tedious UI implementation | Business logic, performance, backend |

The benefits of this approach:

Faster time-to-market. Automated code generation shaves weeks off development timelines.

Fewer communication gaps. Translating the design file directly removes the risk of a developer misinterpreting the designer's intent.

Access for non-technical creators. Founders without coding experience can ship working apps.

Better use of engineering time. Developers focus on backend logic and performance instead of UI implementation.

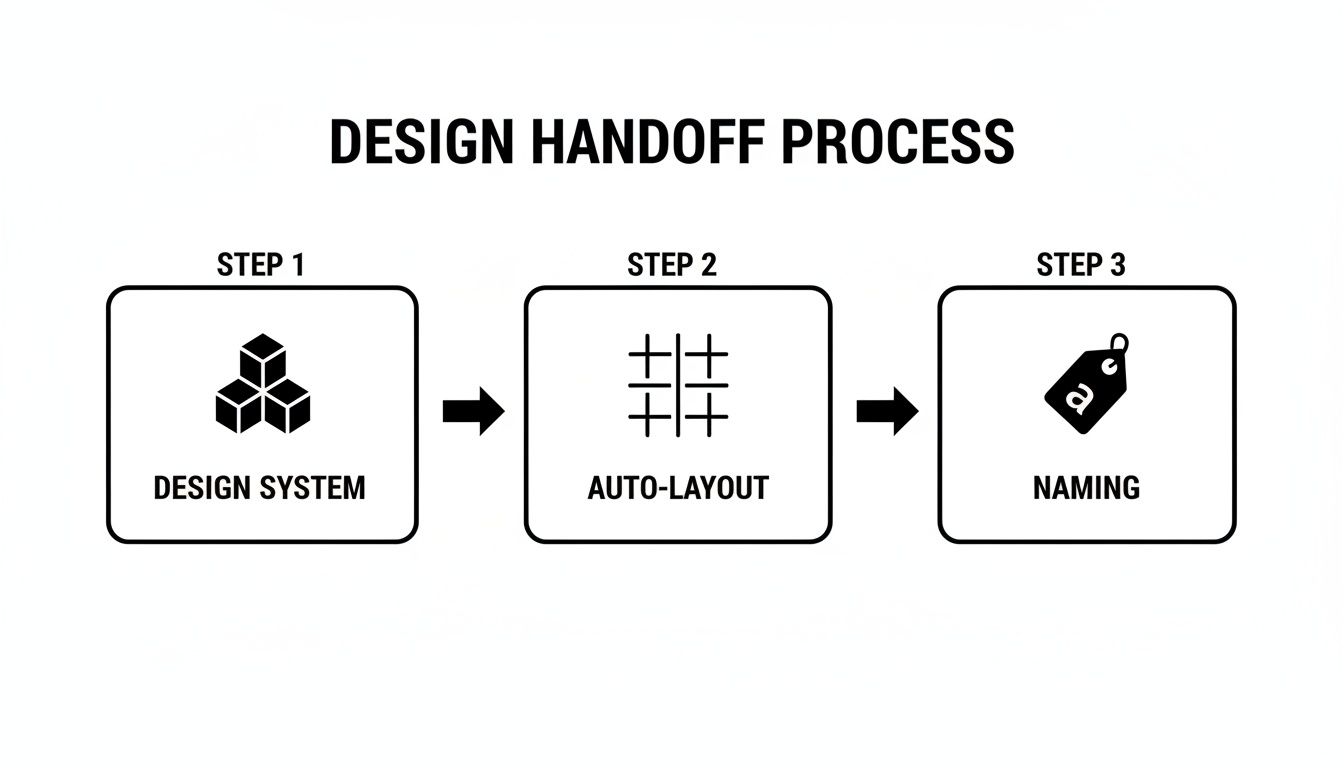

Preparing Your Designs for the Handoff

The quality of your final code reflects the quality of your initial design. Whether you're starting from a Figma mockup or a text prompt, preparation separates a clean conversion from a messy one.

Build a Consistent Design System

A design system is your single source of truth: a library of reusable components, defined styles, and clear guidelines. When an AI tool scans your design, it looks for these patterns first.

A solid design system includes:

Color palette. Primary, secondary, and accent colors defined once and applied everywhere.

Typography scale. Rules for headings, body text, and labels used uniformly.

Component library. Buttons, input fields, cards, and nav bars built as reusable components with defined states.

Spacing and grid. Consistent margins, padding, and layout rules.

Without this, the AI may treat three buttons in near-identical shades of blue as three separate elements, bloating the output with redundant code. With it, the system recognizes them as one "Primary Button" component.
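To make the "three near-identical blues" problem concrete, here is a minimal TypeScript sketch of the kind of check a conversion tool (or a designer auditing a file) might run: it snaps each raw fill color to the closest named palette token. The token names, hex values, and distance threshold are assumptions for illustration, and plain RGB distance is a simplification; perceptual color spaces judge similarity more accurately.

```typescript
// Hypothetical palette tokens; names and hex values are illustrative only.
const palette: Record<string, string> = {
  primary: "#1E3A8A",
  accent: "#FF7F50",
  surface: "#FFFFFF",
};

// Parse "#RRGGBB" into [r, g, b] components (0-255).
function hexToRgb(hex: string): [number, number, number] {
  const n = parseInt(hex.replace("#", ""), 16);
  return [(n >> 16) & 0xff, (n >> 8) & 0xff, n & 0xff];
}

// Squared Euclidean distance in RGB space: crude, but enough to catch
// near-duplicates such as #1E3A8A vs #1E3B8C.
function colorDistance(a: string, b: string): number {
  const [r1, g1, b1] = hexToRgb(a);
  const [r2, g2, b2] = hexToRgb(b);
  return (r1 - r2) ** 2 + (g1 - g2) ** 2 + (b1 - b2) ** 2;
}

// Return the token whose color is closest to `hex`, or null if none is
// within `maxDistance` (an assumed threshold).
function snapToToken(hex: string, maxDistance = 300): string | null {
  let best: string | null = null;
  let bestDist = Infinity;
  for (const name of Object.keys(palette)) {
    const d = colorDistance(hex, palette[name]);
    if (d < bestDist) {
      bestDist = d;
      best = name;
    }
  }
  return bestDist <= maxDistance ? best : null;
}

// Three "different" button fills from a messy file collapse to one token.
const buttonFills = ["#1E3A8A", "#1E3B8C", "#203A88"];
console.log(buttonFills.map((c) => snapToToken(c))); // each maps to "primary"
```

Colors that return `null` are worth a second look: they are either a genuinely new token or an accidental one-off.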

Use Logical Layer Names and Hierarchy

How you name and group your layers in Figma matters. AI doesn't see your design the way a person does. It reads the underlying structure.

Compare two versions of a simple user profile card.

Poorly structured:

Rectangle 1

Image 3

Text 5

Text 6

Group 12

Well-structured:

ProfileCard (Frame)
  Avatar (Component)
    ProfileImage (Image)
  UserDetails (Group)
    UserName (Text)
    UserHandle (Text)
  FollowButton (Component)

The second version gives the AI context. It knows ProfileCard is the parent container and can infer the purpose of each nested element, which translates into more readable code.
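You can catch the poorly structured case before handoff. The sketch below walks a simplified layer tree and flags default-style names like "Rectangle 1" or "Group 12". The `Layer` shape and the list of generic prefixes are assumptions; this is not Figma's API, whose node objects carry many more fields.

```typescript
// Minimal layer model (hypothetical); real design-tool nodes have many more properties.
interface Layer {
  name: string;
  children?: Layer[];
}

// Auto-generated names such as "Rectangle 1" or "Group 12" (assumed prefix list).
const GENERIC_NAME = /^(Rectangle|Ellipse|Frame|Group|Image|Text|Vector|Line)\s+\d+$/;

// Depth-first walk that returns the full path of every generically named layer.
function findGenericLayers(layer: Layer, path: string[] = []): string[] {
  const here = path.concat(layer.name);
  const found: string[] = [];
  if (GENERIC_NAME.test(layer.name)) found.push(here.join(" / "));
  for (const child of layer.children ?? []) {
    found.push(...findGenericLayers(child, here));
  }
  return found;
}

// Loosely modeled on the two profile-card versions above.
const messy: Layer = {
  name: "Group 12",
  children: [{ name: "Rectangle 1" }, { name: "Image 3" }, { name: "Text 5" }, { name: "Text 6" }],
};
const clean: Layer = {
  name: "ProfileCard",
  children: [
    { name: "Avatar", children: [{ name: "ProfileImage" }] },
    { name: "UserDetails", children: [{ name: "UserName" }, { name: "UserHandle" }] },
    { name: "FollowButton" },
  ],
};

console.log(findGenericLayers(messy)); // five flagged paths, starting with "Group 12"
console.log(findGenericLayers(clean)); // empty array
```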

If you're working in Figma, Dev Mode is the feature that exposes these layer and component details to developers and AI tools. Designer Jesse Showalter has a 14-minute video tutorial that walks through its key features.

Starting From a Prompt Instead of a Design

If you're starting with just an idea, your prompt is your design file. A good prompt is specific and context-rich.

Instead of "Make a fitness app," try:

Create a mobile app for tracking home workouts. Primary color: deep navy blue, with coral accents for buttons and links. Include a dashboard showing today's workout, weekly progress, and a log of completed exercises. Users sign up with email, browse a video workout library categorized by muscle group, and run a timer during sessions. Feel: modern, clean, motivating.

That level of detail gives the AI designer agent what it needs (color theme, screen list, feature set) without ambiguity.

How AI Agents Build Your App

Modern design-to-code platforms use a multi-agent system. Instead of one generalist AI doing everything, the work splits among specialists, mirroring a human dev team's structure at software speed.

The Multi-Agent System

When you submit a design or prompt, each agent takes a distinct role:

Requirements agent. Acts as a product manager, extracting features, user flows, and goals from your input.

Designer agent. Sets the theme, color palette, typography, and visual foundation.

Software agents. Take the design specs and write the code, typically in React Native with Expo, so a single codebase runs on both iOS and Android.
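The hand-off between agents can be sketched as a typed pipeline. Everything here is hypothetical: the `Requirements` and `DesignSpec` shapes, the file paths, the font, and the stub agents stand in for what, on a real platform, would be model calls. The point is the structure: after the requirements step, each specialist works from the previous agent's structured output rather than the raw prompt.

```typescript
// Hypothetical data each agent hands to the next.
interface Requirements {
  screens: string[];
  features: string[];
}
interface DesignSpec {
  primaryColor: string;
  accentColor: string;
  fontFamily: string;
}
interface GeneratedFile {
  path: string;
  contents: string;
}

// Each agent is modeled as a plain function; a real platform would call a model here.
type RequirementsAgent = (prompt: string) => Requirements;
type DesignerAgent = (req: Requirements) => DesignSpec;
type SoftwareAgent = (req: Requirements, design: DesignSpec) => GeneratedFile[];

function runPipeline(
  prompt: string,
  requirementsAgent: RequirementsAgent,
  designerAgent: DesignerAgent,
  softwareAgent: SoftwareAgent,
): GeneratedFile[] {
  const req = requirementsAgent(prompt); // product-manager role: features and flows
  const design = designerAgent(req); // theme, palette, typography
  return softwareAgent(req, design); // code for each screen
}

// Stub agents so the sketch runs without any AI service.
const files = runPipeline(
  "Home workout tracker with a dashboard, video library, and timer",
  () => ({ screens: ["Dashboard", "Library", "Timer"], features: ["email sign-up"] }),
  () => ({ primaryColor: "#1E3A8A", accentColor: "#FF7F50", fontFamily: "Inter" }),
  (req, design) =>
    req.screens.map((screen) => ({
      path: `app/${screen.toLowerCase()}.tsx`,
      contents: `// ${screen} screen, primary color ${design.primaryColor}`,
    })),
);

console.log(files.map((f) => f.path)); // dashboard, library, and timer files
```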

Live Preview and Iteration

You can guide the process in plain English. Type "Make the login button bigger and change its color to the main brand blue," and the agents adjust both the design and the code. Changes appear instantly in the preview.

Most platforms give you a live preview in the browser and a QR code to load the working app onto your iPhone or Android device. That lets you test usability and responsiveness like a real user, without waiting for a build.

Refining and Customizing the Generated Code

AI-generated code gives you a head start, but it's rarely the final version. The polish comes when a human expert steps in.

Treat the output like a first draft from a competent junior developer: well-structured and functional, but needing senior review. According to the JetBrains 2025 State of Developer Ecosystem report, 85% of developers now use AI tools regularly and 62% use them every day. That adoption is what makes this hybrid workflow the new default.

When You Need to Step In

Plan to intervene for:

UI polish. The AI gets close, but minor alignment and spacing fixes are common.

Complex business logic. Intricate state management or custom algorithms usually need manual coding.

Performance tuning. Optimizing rendering, network requests, and animations.

Niche API integrations. Specialized third-party services often need specific configurations and error handling.
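As an example of the business logic that usually lands on a developer, here is a sketch of the workout-timer state from the fitness-app prompt earlier, written as a pure reducer. The state shape and action names are hypothetical; a reducer like this can be passed to React's `useReducer` hook and unit-tested without rendering anything.

```typescript
// Hypothetical session state for the home-workout app.
interface SessionState {
  status: "idle" | "running" | "paused";
  exercise: string | null;
  secondsLeft: number;
  completed: string[]; // log of finished exercises
}

type SessionAction =
  | { type: "start"; exercise: string; durationSeconds: number }
  | { type: "tick" } // dispatched once per second by a timer
  | { type: "pause" }
  | { type: "resume" };

const initialState: SessionState = { status: "idle", exercise: null, secondsLeft: 0, completed: [] };

// Pure function: same input always yields the same output, which keeps it testable.
function sessionReducer(state: SessionState, action: SessionAction): SessionState {
  switch (action.type) {
    case "start":
      // Ignore a new start while an exercise is already in progress.
      if (state.status !== "idle") return state;
      return { ...state, status: "running", exercise: action.exercise, secondsLeft: action.durationSeconds };
    case "tick": {
      if (state.status !== "running") return state; // timer does nothing when idle or paused
      const secondsLeft = state.secondsLeft - 1;
      if (secondsLeft > 0) return { ...state, secondsLeft };
      // Time is up: log the exercise and return to idle.
      return {
        status: "idle",
        exercise: null,
        secondsLeft: 0,
        completed: state.exercise ? [...state.completed, state.exercise] : state.completed,
      };
    }
    case "pause":
      return state.status === "running" ? { ...state, status: "paused" } : state;
    case "resume":
      return state.status === "paused" ? { ...state, status: "running" } : state;
  }
}

let s = sessionReducer(initialState, { type: "start", exercise: "Plank", durationSeconds: 2 });
s = sessionReducer(s, { type: "tick" }); // 1 second left
s = sessionReducer(s, { type: "pause" });
s = sessionReducer(s, { type: "tick" }); // ignored while paused
s = sessionReducer(s, { type: "resume" });
s = sessionReducer(s, { type: "tick" }); // finished
console.log(s.status, s.completed); // status "idle", completed ["Plank"]
```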

Syncing with GitHub

Instead of downloading a static ZIP, you can sync the project to a dedicated GitHub repository. Our guide on the Figma to React workflow explains how a synced repository makes component management easier.

The full cycle:

Generate and sync. The AI platform creates the initial app and pushes it to a new GitHub repo.

Clone and customize. Pull the repository to your local machine and open it in your editor.

Refine. Write custom business logic, fine-tune the UI, and add features the AI couldn't generate.

Push and continue. Commit your changes back. The platform stays in sync so future modifications don't overwrite manual work.
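Platforms differ in how they avoid overwriting manual edits. One simple approach, sketched below purely as an assumption, is to record a hash of each file as it was last generated and only overwrite files whose current contents still match that hash. FNV-1a is used just to keep the example dependency-free; a real tool would more likely use a cryptographic hash or Git history. The file paths and contents are hypothetical.

```typescript
// FNV-1a 32-bit string hash (dependency-free stand-in for a real content hash).
function fnv1a(text: string): string {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash.toString(16);
}

interface FileRecord {
  contents: string; // what is in the repo now
  lastGeneratedHash: string; // hash of what the platform last wrote
}

// Decide per file whether freshly generated output can safely replace it.
function planRegeneration(
  repo: Map<string, FileRecord>,
  regenerated: Map<string, string>,
): { overwrite: string[]; keep: string[] } {
  const overwrite: string[] = [];
  const keep: string[] = [];
  regenerated.forEach((_newContents, path) => {
    const existing = repo.get(path);
    if (!existing || fnv1a(existing.contents) === existing.lastGeneratedHash) {
      overwrite.push(path); // new file, or untouched since the last generation
    } else {
      keep.push(path); // a developer edited it: leave it for a manual merge
    }
  });
  return { overwrite, keep };
}

const generatedLogin = "export const Login = () => null;";
const repo = new Map<string, FileRecord>([
  ["app/login.tsx", { contents: generatedLogin, lastGeneratedHash: fnv1a(generatedLogin) }],
  ["app/feed.tsx", { contents: "// hand-tuned feed logic", lastGeneratedHash: fnv1a("export const Feed = () => null;") }],
]);

const plan = planRegeneration(
  repo,
  new Map([
    ["app/login.tsx", "export const Login = () => <LoginForm />;"],
    ["app/feed.tsx", "export const Feed = () => <FeedList />;"],
    ["app/settings.tsx", "export const Settings = () => null;"],
  ]),
);
console.log(plan); // login and settings are overwritten; the hand-edited feed is kept
```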

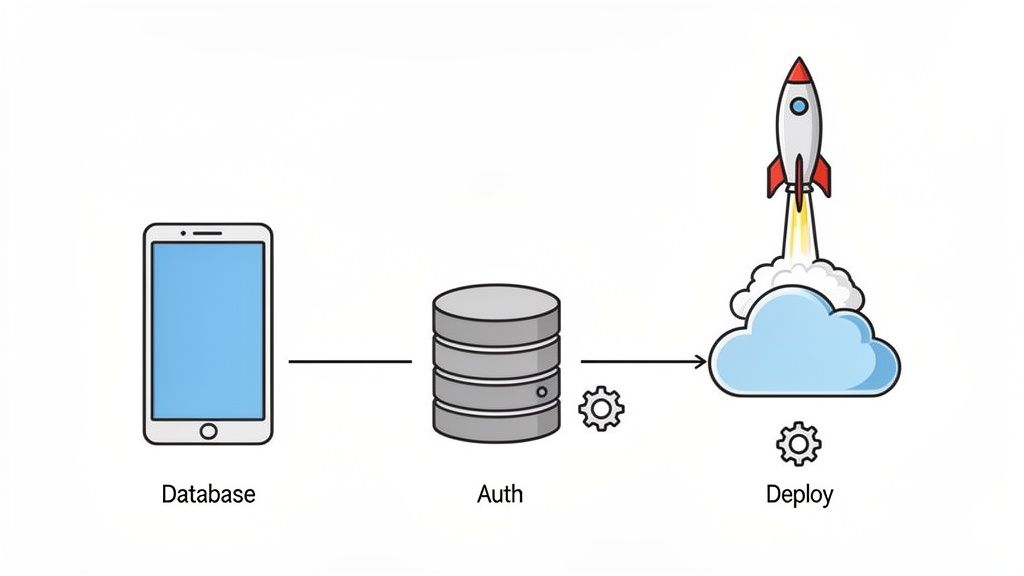

Integrating a Backend and Launching

A finished app needs a backend: data storage, user accounts, and logic that drives core features. Modern AI platforms handle the backend too, giving you a full-stack solution from a single conversation.

Generating the Backend From a Prompt

Modern platforms integrate with Backend-as-a-Service providers like Supabase. When you describe features like "user login" or "a feed of posts," the system provisions the actual backend infrastructure:

Database schema. Tables and relationships for user profiles, content, and app data.

User authentication. Sign-up, login, and password reset flows connected to the UI.

Server-side logic. Serverless functions and API endpoints for basic operations.

Front end and back end stay in sync from the start. For a deeper look at how this works, see our guide on the role of backend services in AI no-code apps.
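To make the "Database schema" step concrete, here is a sketch of turning a table description (the kind an agent might extract from "a feed of posts") into a Postgres `create table` statement, since Supabase runs on Postgres. The `TableSpec` shape is hypothetical, not a Supabase API; `auth.users` is the table where Supabase Auth stores user accounts, and `gen_random_uuid()` and `now()` are standard Postgres functions.

```typescript
// Hypothetical column and table descriptions.
interface Column {
  name: string;
  type: "uuid" | "text" | "timestamptz";
  primaryKey?: boolean;
  notNull?: boolean;
  defaultValue?: string; // raw SQL expression, e.g. "now()"
  references?: string; // "table(column)" for a foreign key
}

interface TableSpec {
  name: string;
  columns: Column[];
}

// Render one table as a Postgres CREATE TABLE statement.
function toCreateTable(table: TableSpec): string {
  const lines = table.columns.map((c) => {
    const parts: string[] = [c.name, c.type];
    if (c.primaryKey) parts.push("primary key");
    if (c.notNull) parts.push("not null");
    if (c.defaultValue) parts.push(`default ${c.defaultValue}`);
    if (c.references) parts.push(`references ${c.references}`);
    return `  ${parts.join(" ")}`;
  });
  return `create table ${table.name} (\n${lines.join(",\n")}\n);`;
}

// A "feed of posts" table linked to Supabase's built-in auth.users table.
const posts: TableSpec = {
  name: "posts",
  columns: [
    { name: "id", type: "uuid", primaryKey: true, defaultValue: "gen_random_uuid()" },
    { name: "author_id", type: "uuid", notNull: true, references: "auth.users(id)" },
    { name: "body", type: "text", notNull: true },
    { name: "created_at", type: "timestamptz", notNull: true, defaultValue: "now()" },
  ],
};

console.log(toCreateTable(posts));
```

In a real Supabase project you would also enable row-level security on a table like this before exposing it to the app.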

From Working App to Live Product

Once the app is functional, an automated build-and-release agent handles deployment. It manages:

Code compilation. Bundling your React Native code into optimized packages for iOS, Android, and web.

Asset management. Packaging every image, font, and icon correctly for each platform.

App store preparation. Generating builds ready for submission to the Apple App Store and Google Play.

Web deployment. Pushing the web version to a hosting provider at a live URL.

That gives you a repeatable path from finished app to live product without fighting deployment scripts.

Frequently Asked Questions

How accurate is the code from a Figma design?

Accuracy depends on the quality of your design file. With a clear design system, consistent components, logical layer names, and proper auto-layout, modern platforms generate code with near pixel-perfect fidelity. Expect minor manual tweaks for final polish. Treat the output as a production-ready starting point a developer can refine, not a hands-off final product.

Can I really do this with zero coding experience?

Yes. Modern platforms are built for non-technical founders and creators. You describe your idea in plain English and AI agents handle both the design and the code. You can:

See a live preview in your browser as the app is built.

Suggest changes naturally, like "make this button bigger."

Test the app on your phone with a QR code.

For MVPs and internal tools, you can often go from idea to launched product without hiring a developer.

What kinds of apps work best?

The workflow fits projects where speed and iteration matter most:

MVPs for startups testing an idea with real users.

Internal tools like dashboards, CRMs, or inventory management apps.

Customer-facing apps for small to medium businesses: e-commerce stores, booking platforms, informational apps.

Apps that need deep hardware integration or intense graphics processing (like high-end games), or that operate in heavily regulated industries, will need more hands-on coding.

How does backend integration work?

Platforms like CatDoes offer optional backend integration with Supabase alongside the front end. When you describe features that need a backend (user logins, saving data, social feeds), the AI provisions:

A database with tables for your app's data.

Authentication for sign-up and login.

API endpoints your front-end code needs to talk to the server.

That turns the tool from a UI generator into a full-stack development platform.

Ready to turn your idea into a real app? With CatDoes, you can go from a prompt or Figma file to a production-ready mobile app in hours. Start building for free on CatDoes today.
